Australia has sent a clear message to the world’s biggest AI companies: If your technology isn’t safe for children, you will pay for it.

Under new proposals announced in March 2026, global AI providers could face penalties of up to $49.5 million if their systems expose children to harm, fail to implement safety controls, or ignore Australia’s child‑safe design expectations.

Why Australia Is Cracking Down Now

Three forces collided in early 2026:

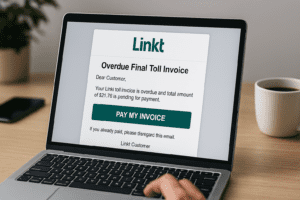

1. AI-generated grooming and impersonation scams surged. Children and teens are now targeted by:

- AI‑generated “friend” personas

- Deepfake voice messages

- Hyper‑personalised phishing

- Automated grooming scripts that adapt in real time

These threats bypass traditional filters and overwhelm parents, teachers, and frontline staff.

2. Big tech’s safety controls haven’t kept pace. Regulators argue that:

- Safety filters are inconsistent

- Age verification is weak

- Harm detection is reactive, not proactive

- Companies release features before assessing child impact

Australia is no longer willing to accept “we’re working on it” as an answer.

3. The eSafety Commissioner is expanding its global leadership. Australia already leads the world in:

- Safety by Design

- Mandatory reporting

- Child‑safe digital standards

The new fines reinforce that leadership and raise the bar for every AI provider operating here.

What the $49.5m Penalty Actually Means

The proposed framework would allow the government to fine AI companies if they fail to:

- Assess child safety risks before releasing new AI features

- Implement age‑appropriate safety controls

- Prevent harmful outputs, including grooming, violence, and sexual content

- Provide transparent reporting on safety incidents

- Respond quickly to child‑related harm reports

This is not a symbolic fine. It’s designed to force global AI companies to build safety into their products before they reach Australian children.

What This Means for Australian Organisations

Even though the fines target big tech, the ripple effects hit every sector Cybermate serves:

Schools

- Expect stricter requirements for AI tools used in classrooms

- More pressure to demonstrate safe adoption of generative AI

- Higher expectations for staff training on AI‑driven scams and impersonation

Charities & Community Organisations

- Increased scrutiny on tools used with vulnerable youth

- Need for clear AI governance and safe‑use guidelines

- More demand for behavioural‑first digital safety programs

Small & Medium Businesses

- Staff will face more AI‑driven social engineering

- Deepfake voice scams targeting finance and HR will rise

- Organisations must show they’re using AI responsibly and safely

Enterprise

- AI governance frameworks will become mandatory

- Regulators will expect proactive risk assessments

- Human‑risk controls will matter as much as technical controls

Why Behaviour Matters More Than Ever

The new fines focus on technology, but the real risk remains human behaviour.

Most AI‑enabled harm happens because:

- Someone trusts the wrong message

- Someone clicks the wrong link

- Someone believes a deepfake voice

- Someone shares information with the wrong “person”

This is why Cybermate exists.

We teach staff, students, and communities how to recognise:

- AI‑generated scams

- Deepfake voices

- Manipulative chatbots

- Social engineering powered by generative AI

Australia Is Leading the World – Again

The $49.5m fines are not about punishing big tech. They’re about setting a global standard for child‑safe AI.

It’s a bold move that aligns perfectly with Cybermate’s mission to make Australia the most cyber-secure nation on earth.